ISPRS WG II/6

Cultural Heritage Data Acquisition and Processing

Mission

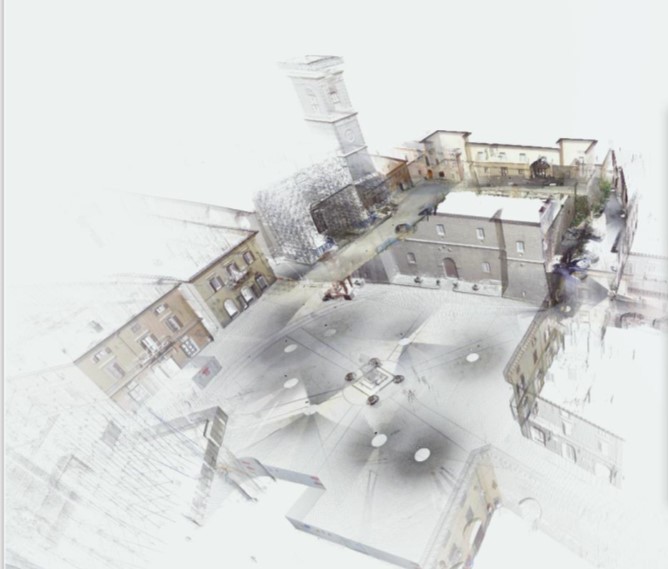

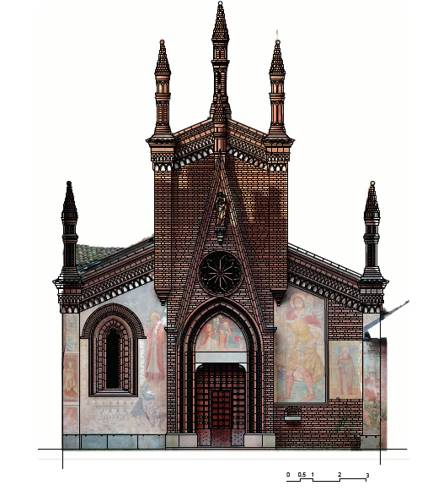

The main objective of ISPRS Working Group II/6 is to promote state-of-the-art techniques for measuring and characterising cultural heritage assets. We also aim to develop innovative metric documentation strategies that benefit the scientific community and the international organisations committed to preserving and maintaining humanity’s legacy. To this end, the working group promotes multi-source data integration and techniques for the remote study of cultural heritage scenarios (architectural elements, archaeological scenarios, natural landscapes, etc.), objects/artifacts, and industrial heritage (buildings, monuments, excavations, etc.). It also promotes the latest metric documentation techniques, multi-temporal studies of these types of scenarios, and modern techniques for 3D cultural heritage data management such as HBIM (Heritage Building Information Models).

This working group is committed to innovation, automation, and accuracy in the digital documentation of cultural heritage. In addition, the group aims to collaborate with international groups closely related to ISPRS that share this mission, actively participating in and organising events to exchange and transmit technical knowledge about heritage documentation. CIPA Heritage Documentation, a joint society under ISPRS and ICOMOS, is one of the main venues of collaboration for this WG.

Working Group Officers

| Role | Name | Affiliation and address | Phone |
| --- | --- | --- | --- |
| Chair | Susana Del Pozo | TIDOP Research Unit, Higher Polytechnic School of Avila, Hornos Caleros, 50, University of Salamanca, Avila, SPAIN | +34 663 170597 |
| Co-Chair | Fulvio Rinaudo | Laboratory “Geomatics for Cultural Heritage” (G4CH), Department of Architecture & Design, Politecnico di Torino, Viale A. Mattioli, 39, 10125 Torino, ITALY | +39 331 6714787 |
| Co-Chair | Charalampos Georgiadis | School of Civil Engineering, The Aristotle University of Thessaloniki, Univ. Box 465, GR 54124 Thessaloniki, GREECE | +30 2310 996171 |
| Secretary | Lorenzo Teppati Losé | Laboratory “Geomatics for Cultural Heritage” (G4CH), Department of Architecture & Design, Politecnico di Torino, Viale A. Mattioli, 39, 10125 Torino, ITALY | +39 3460171863 |

Supporters

| Role | Name | Affiliation and address |
| --- | --- | --- |
| Supporter | Arnadi Dhestaratri Murtiyoso | INSA STRASBOURG, 24 Bld de la Victoire, Strasbourg, FRANCE |

Terms of Reference

- Innovative strategies and solutions in the collection and processing of data for the documentation of cultural heritage

- Automation strategies in data processing

- Multi-source data fusion

- Remote sensing in cultural heritage

- Cultural heritage monitoring

- Metrics and accuracy in cultural heritage

- Cultural heritage modelling towards HBIM

- Virtual and augmented reality

- Open-source promotion

- Low-cost merging strategies

- Cultural heritage data acquisition standards

- Establishment of good practice protocols
